It’s no surprise that, like everything else in 2026, GTC was all about AI. Nvidia showcased its advancements in data center hardware, the first product of its “acquisition” of Groq, and an entirely new kind of DLSS.

DLSS 5

With the announcement of DLSS 5, Nvidia wants to bring real-time photorealistic graphics into games.

They created a lighting technique in which the new AI model takes the game’s motion vectors, sort of understands how materials like cloth or skin react to real-life light, and generates its output based on that. In a sense it’s not even graphics rendering; it’s what they call “neural rendering”.

Previous DLSS models upscaled from a lower resolution, inserted generated frames between rendered ones, and were mainly intended to improve performance. This new generation takes a different approach: it aims to entirely change how lighting is done. So we are closing in on the day when some games might be indistinguishable from real life.

Vera Data Center CPU

Nvidia is also coming for the data center CPU space with its brand-new Vera CPU. While we usually associate Nvidia with powerhouse GPUs, Vera is designed to handle the heavy lifting of traditional computing as well as “agentic AI” workloads. It’s the successor to the Grace architecture, and the specs look solid: 88 cores per chip and a 1.5x boost in performance over the previous generation.

Nvidia is even claiming it’s the fastest single-threaded processor on the market, all while being twice as power-efficient as its x86 competitors.

Groq 3 LPU

Following the acquisition a few months ago, Nvidia has already integrated Groq’s tech into its upcoming Vera Rubin platform. With the Groq 3 LPU, Nvidia is moving away from standard high-bandwidth memory (HBM) in favor of 500 MB of on-chip SRAM.

While HBM is great for capacity, it can be a bottleneck for raw speed; SRAM, on the other hand, allows the LPU to hit a staggering 150 TB/s of bandwidth. It also kind of helps Nvidia sidestep the current GDDR7 and HBM supply shortages.
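To get a feel for what those two figures mean together, here is a back-of-envelope sketch in Python. It uses only the 500 MB and 150 TB/s numbers quoted above and assumes decimal units (1 MB = 10^6 bytes, 1 TB = 10^12 bytes); the function name is purely illustrative, and real workloads will not hit the peak rate.

```python
def full_sweep_seconds(capacity_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Idealized time to read every byte of a memory once at peak bandwidth."""
    return capacity_bytes / bandwidth_bytes_per_s


SRAM_BYTES = 500e6       # 500 MB of on-chip SRAM (decimal units assumed)
SRAM_BANDWIDTH = 150e12  # 150 TB/s quoted peak bandwidth

sweep = full_sweep_seconds(SRAM_BYTES, SRAM_BANDWIDTH)
sweeps_per_second = 1 / sweep

print(f"one full pass over SRAM: {sweep * 1e6:.2f} microseconds")
print(f"full passes per second:  {sweeps_per_second:,.0f}")
```

At the quoted peak, the chip could in principle stream through its entire SRAM in a few microseconds, which is the kind of headroom that matters when every generated token needs the weights to be read again.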

The real-world impact of this hardware shift is all about multiplying speed for the next generations of AI. This isn’t only about making ChatGPT faster for humans to read; it’s about moving the benchmark from 100 tokens per second (TPS) to over 1,500 TPS. This is built for multi-agent systems, where AI models talk to other AI models at speeds far beyond human comprehension.
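A quick way to see what that jump means in practice is to time a fixed amount of output at both rates. The sketch below is plain arithmetic on the 100 and 1,500 TPS figures; the 10,000-token exchange is a made-up example size, not something Nvidia quoted.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


RESPONSE_TOKENS = 10_000  # hypothetical length of one agent-to-agent exchange

for tps in (100, 1_500):
    secs = generation_seconds(RESPONSE_TOKENS, tps)
    print(f"{tps:>5} TPS -> {secs:6.1f} s for {RESPONSE_TOKENS:,} tokens")

speedup = 1_500 / 100  # ratio of the two quoted rates
print(f"speedup: {speedup:.0f}x")
```

For a chain of agents that each wait on the previous one's output, that 15x difference compounds at every hop, which is why it matters more for multi-agent pipelines than for a human reading a chat reply.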

Rubin Ultra tray

They also demonstrated the Rubin Ultra tray, a next-generation AI platform scheduled to arrive in 2027. The Rubin Ultra package is the first AI accelerator to feature a massive 1 TB of HBM4E memory. The impressive part is that the tray is designed almost entirely without cables to simplify server assembly. It is also expected to deliver up to 100 petaflops of FP4 compute.

The Rubin Ultra chips will be deployed in a new rack-scale system codenamed Kyber, which is completely vertical and liquid-cooled by default. It also integrates 144 GPU packages in a single rack, which almost quadruples the current generation.

BlueField-4 STX

Nvidia also announced the BlueField-4 STX, a new storage tier that sits between high-speed GPU memory and persistent storage.

Traditional storage is designed for durability and capacity, but AI agents require rapid access to the KV cache, the “working memory” of a conversation or reasoning session.

As context windows grow to millions of tokens, this cache becomes too large for GPU VRAM, yet it is too slow to retrieve from traditional SSDs, which causes “GPU stalls”. That is the problem this rack specifically targets, and it shows how entire data centers are being adapted to modern AI demands.
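To see why the cache outgrows GPU memory, here is a rough sizing sketch using the standard transformer estimate: each token stores one key and one value vector per layer per KV head. The model dimensions below (80 layers, 8 KV heads, head size 128, 2-byte values) are hypothetical and chosen only for illustration; they are not taken from Nvidia's announcement.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Approximate KV cache size for one sequence.

    The factor of 2 accounts for storing both a key and a value
    vector per token, per layer, per KV head.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical model shape, for illustration only
MODEL = dict(layers=80, kv_heads=8, head_dim=128)

for tokens in (8_000, 128_000, 1_000_000):
    gb = kv_cache_bytes(tokens, **MODEL) / 1e9  # decimal gigabytes
    print(f"{tokens:>9,} tokens -> {gb:7.1f} GB of KV cache")
```

Under these assumptions a single million-token session needs hundreds of gigabytes for the cache alone, and a server handles many sessions at once, so the cache has to spill somewhere faster than an SSD but larger than VRAM.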

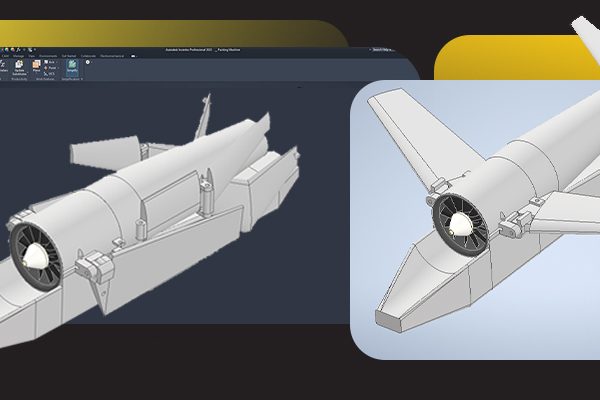

Nvidia Launches Space Computing

Nvidia has also announced a new piece of hardware called the Vera Rubin Space Module, designed specifically to bring high-end AI power into outer space.

It is a specialized AI SoC built to survive and operate in the harsh environment of orbit. Instead of sending massive amounts of raw data back to Earth to be processed, satellites can now “think” and analyze data right there in space. The extra power helps spacecraft navigate themselves and make quick decisions without waiting for instructions from ground control.

It can process high-resolution satellite images or scientific data in real time, helping with things like climate monitoring or space exploration.